Abstract

Large language model (LLM) agents increasingly rely on external tools and structured workflows to accomplish complex tasks. While recent work has emphasised improving reasoning quality and prompting strategies, the orchestration layer responsible for tool selection, execution sequencing, escalation, and constraint handling remains largely heuristic.

This work introduces Agent Learning, a framework that formalises orchestration in tool-augmented LLM systems as an execution-aware policy optimisation problem. An agent is modelled as a triplet consisting of a fixed stochastic reasoning module, a set of external tools, and an orchestration policy that maps system states to actions.
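One way to write the triplet and the optimisation target down is shown below. The symbols other than π (the agent tuple 𝒢, reasoning module M, tool set 𝒯 with tools τ_k, state space 𝒮, action space 𝒜, per-step cost c, horizon H) are notation introduced here for illustration. The objective assumes an additive per-step execution cost over a finite horizon, with the expectation taken over the stochasticity of M and the tools; constraint handling is left out of this sketch.

```latex
\begin{aligned}
\mathcal{G} &= (M, \mathcal{T}, \pi), \qquad
\mathcal{T} = \{\tau_1, \dots, \tau_K\}, \qquad
\pi : \mathcal{S} \to \mathcal{A}, \\
J(\pi) &= \mathbb{E}\Big[\textstyle\sum_{t=0}^{H-1} c(s_t, a_t)\Big], \qquad
a_t = \pi(s_t), \qquad
\pi^\star \in \arg\min_{\pi} J(\pi).
\end{aligned}
```

Only π is optimised; M and 𝒯 are held fixed, which matches the framing of a fixed reasoning module.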

Empirical evaluation in a controlled synthetic tool environment shows that learned policies consistently outperform heuristic baselines, reducing execution cost and improving constraint satisfaction across multiple random seeds.

Methods

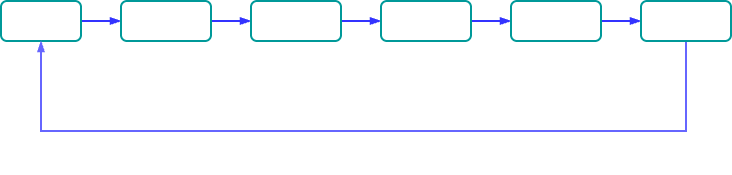

The agent is formulated as a closed-loop system in which an orchestration policy π maps the current system state to an action. The selected action is executed through the reasoning module and tool environment, producing an updated state and an associated execution cost. This feedback process defines a sequential decision problem over trajectories rather than isolated steps, enabling optimisation of long-horizon behaviour under cost and constraint considerations.
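The closed loop can be sketched as a rollout function that repeatedly queries the policy, executes the chosen action, and accumulates cost over the whole trajectory. Everything here is a hypothetical toy: the `State` fields, the two action labels, the 30% failure rate and the cost values are illustrative assumptions standing in for the real reasoning module and tool environment.

```python
import random
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class State:
    """Hypothetical toy system state: subtasks left and failed tool calls so far."""
    remaining: int
    failures: int = 0


Action = str  # illustrative action labels: "call_tool" or "escalate"
Policy = Callable[[State], Action]


def execute(state: State, action: Action, rng: random.Random) -> tuple[State, float]:
    """Toy stand-in for the reasoning module plus tool environment.

    Returns the updated state and the execution cost of this single step.
    """
    if action == "call_tool":
        # Cheap call that fails 30% of the time; a failure leaves the subtask pending.
        if rng.random() < 0.3:
            return State(state.remaining, state.failures + 1), 1.0
        return State(state.remaining - 1, state.failures), 1.0
    if action == "escalate":
        # Expensive escalation that always completes the current subtask.
        return State(state.remaining - 1, state.failures), 3.0
    raise ValueError(f"unknown action: {action}")


def rollout(policy: Policy, initial: State, seed: int,
            max_steps: int = 100) -> tuple[list[State], float]:
    """Run the loop state -> policy -> execute -> state, summing cost over the trajectory."""
    rng = random.Random(seed)
    state, total_cost = initial, 0.0
    trajectory = [initial]
    for _ in range(max_steps):
        if state.remaining == 0:  # task complete
            break
        action = policy(state)
        state, cost = execute(state, action, rng)
        total_cost += cost
        trajectory.append(state)
    return trajectory, total_cost


def always_escalate(state: State) -> Action:
    return "escalate"


def always_call_tool(state: State) -> Action:
    return "call_tool"


traj, cost = rollout(always_escalate, State(remaining=4), seed=0)
print(len(traj), cost)  # 5 12.0
```

The quantity of interest is `total_cost` for the whole trajectory rather than the cost of any single step, which is what makes this a sequential decision problem.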

Figure 1 illustrates this closed-loop interaction between policy, execution, and environment.

Implications

Framing orchestration as a learned policy exposes limitations in current heuristic agent systems. Static routing strategies optimise for immediate outcomes and fail to account for long-horizon execution cost, constraint violations, and recovery dynamics under failure.
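A toy calculation shows how optimising for immediate outcomes can mislead once failures and retries are taken into account. The tool names, prices and success probabilities are hypothetical, and the sketch assumes a tool is simply retried until it succeeds, so the expected number of calls is 1/p (geometric distribution). A greedy router that picks the lowest per-call cost chooses the unreliable tool, while a horizon-aware choice that prices in recovery picks the reliable one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    """Hypothetical tool with a fixed per-call cost and success probability."""
    name: str
    cost_per_call: float
    success_prob: float


def expected_cost_until_success(tool: Tool) -> float:
    # Retrying until success is geometric: expected number of calls = 1 / p.
    return tool.cost_per_call / tool.success_prob


def greedy_choice(tools: list[Tool]) -> Tool:
    # Static routing: looks only at the immediate cost of one call.
    return min(tools, key=lambda t: t.cost_per_call)


def horizon_aware_choice(tools: list[Tool]) -> Tool:
    # Accounts for the cost of recovering from failures over the episode.
    return min(tools, key=expected_cost_until_success)


tools = [Tool("cheap_search", 1.0, 0.25), Tool("reliable_api", 3.0, 0.9)]
print(greedy_choice(tools).name)               # cheap_search
print(horizon_aware_choice(tools).name)        # reliable_api
print(expected_cost_until_success(tools[0]))   # 4.0
```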

In contrast, a learned orchestration policy enables optimisation over trajectories rather than individual steps. This permits explicit trade-offs between latency, monetary cost, and task success, while supporting adaptive behaviour under changing tool performance and environmental conditions.
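The text does not specify how latency, monetary cost and task success are combined. A minimal sketch, assuming a linear scalarisation with a penalty for expected failure, shows how the weights make the trade-off explicit: the same two candidate plans (hypothetical names and estimates) swap rank when the weighting changes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanEstimate:
    """Hypothetical per-trajectory estimates for one orchestration plan."""
    name: str
    latency_s: float      # expected end-to-end latency in seconds
    monetary_cost: float  # expected spend, arbitrary currency units
    success_rate: float   # probability that the task is completed


def scalarised_cost(p: PlanEstimate, w_latency: float, w_money: float,
                    failure_penalty: float) -> float:
    # Weighted latency + weighted spend + expected penalty for failing the task.
    return (w_latency * p.latency_s
            + w_money * p.monetary_cost
            + (1.0 - p.success_rate) * failure_penalty)


def best_plan(plans: list[PlanEstimate], **weights: float) -> PlanEstimate:
    return min(plans, key=lambda p: scalarised_cost(p, **weights))


plans = [
    PlanEstimate("single_call", latency_s=2.0, monetary_cost=0.01, success_rate=0.60),
    PlanEstimate("verify_and_retry", latency_s=6.0, monetary_cost=0.03, success_rate=0.95),
]
# A latency-sensitive weighting favours the fast plan...
print(best_plan(plans, w_latency=1.0, w_money=10.0, failure_penalty=5.0).name)   # single_call
# ...while a heavy failure penalty favours the verified plan.
print(best_plan(plans, w_latency=0.1, w_money=10.0, failure_penalty=50.0).name)  # verify_and_retry
```

A learned policy would estimate these quantities from experience rather than take them as given; the fixed numbers here only illustrate the trade-off.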

This formulation also establishes a clear interface between reasoning and execution. The reasoning module remains fixed, while the orchestration layer becomes the primary locus of optimisation. As a result, improvements in system performance can be achieved without modifying the underlying model, enabling modular and scalable system design.
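The separation between a fixed reasoning module and a swappable orchestration policy can be expressed as an interface. In this sketch, `ReasoningModule`, `StubReasoner`, `run_agent` and the two policies are hypothetical names, and `str.upper` stands in for an external tool. Changing system behaviour only requires passing a different policy; the reasoner object is never modified.

```python
from typing import Callable, Protocol


class ReasoningModule(Protocol):
    """Fixed reasoning component; orchestration learning never updates it."""
    def generate(self, prompt: str) -> str: ...


class StubReasoner:
    """Hypothetical stand-in for a frozen LLM."""
    def generate(self, prompt: str) -> str:
        return f"reasoned({prompt})"


Tool = Callable[[str], str]
# The orchestration policy sees the execution history and returns an action name.
OrchestrationPolicy = Callable[[list[str]], str]


def run_agent(reasoner: ReasoningModule, tools: dict[str, Tool],
              policy: OrchestrationPolicy, task: str, max_steps: int = 5) -> list[str]:
    history = [task]
    for _ in range(max_steps):
        action = policy(history)
        if action == "finish":
            break
        if action == "reason":
            history.append(reasoner.generate(history[-1]))
        else:
            history.append(tools[action](history[-1]))
    return history


def reason_then_tool(history: list[str]) -> str:
    # Reason once, post-process with a tool, then stop.
    return ["reason", "upper", "finish"][min(len(history) - 1, 2)]


def tool_only(history: list[str]) -> str:
    # Skip the reasoning module entirely.
    return "upper" if len(history) == 1 else "finish"


TOOLS: dict[str, Tool] = {"upper": str.upper}
print(run_agent(StubReasoner(), TOOLS, reason_then_tool, "q"))  # ['q', 'reasoned(q)', 'REASONED(Q)']
print(run_agent(StubReasoner(), TOOLS, tool_only, "q"))         # ['q', 'Q']
```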